SQL TRANSFORMATION

You can pass the database connection information to the SQL transformation as input data at run time. The transformation processes external SQL scripts or SQL queries that you create in an SQL editor. The SQL transformation processes the query and returns rows and database errors.

When you create an SQL transformation, you configure the following options:

- Mode. The SQL transformation runs in one of the following modes:

  - Script mode. The SQL transformation runs ANSI SQL scripts that are externally located. You pass a script name to the transformation with each input row. The SQL transformation outputs one row for each input row.

  - Query mode. The SQL transformation executes a query that you define in a query editor. You can pass strings or parameters to the query to define dynamic queries or change the selection parameters. You can output multiple rows when the query has a SELECT statement.

- Passive or active transformation. The SQL transformation is an active transformation by default. You can configure it as a passive transformation when you create the transformation.

- Database type. The type of database the SQL transformation connects to.

- Connection type. Pass database connection information to the SQL transformation or use a connection object.

An SQL transformation running in script mode runs SQL scripts from text files. You pass each script file name from the source to the SQL transformation Script Name port. The script file name contains the complete path to the script file.

When you configure the transformation to run in script mode, you create a passive transformation. The transformation returns one row for each input row. The output row contains results of the query and any database error.
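The one-row-in, one-row-out behavior of script mode can be illustrated outside Informatica. The following is only a rough sketch: it uses Python's built-in `sqlite3` module as a stand-in database, and the function name `run_script_mode`, the file names, and the `PASSED`/`FAILED` result strings are assumptions made for this illustration, not the Integration Service's actual implementation.

```python
import sqlite3
import tempfile
from pathlib import Path


def run_script_mode(conn, script_paths):
    """Hypothetical helper: return one (result, error) pair per input script path."""
    output_rows = []
    for path in script_paths:
        try:
            script = Path(path).read_text()
            # A script may hold several statements separated by semicolons.
            conn.executescript(script)
            output_rows.append(("PASSED", None))
        except (OSError, sqlite3.Error) as exc:
            # The database (or file) error travels with the output row.
            output_rows.append(("FAILED", str(exc)))
    return output_rows


with tempfile.TemporaryDirectory() as tmp:
    good = Path(tmp) / "good.sql"
    good.write_text("CREATE TABLE t (id INTEGER); INSERT INTO t VALUES (1);")
    bad = Path(tmp) / "bad.sql"
    bad.write_text("INSERT INTO missing_table VALUES (1);")

    conn = sqlite3.connect(":memory:")
    # Each input row carries the complete path to one script file.
    rows = run_script_mode(conn, [str(good), str(bad)])
    conn.close()

for result, error in rows:
    print(result, error)
```

Two script paths go in and exactly two rows come out, each holding a result and, for the failing script, the database error text.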

Rules and Guidelines for Script Mode

Use the following rules and guidelines for an SQL transformation that runs in script mode:

- You can use a static or dynamic database connection with script mode.

- To include multiple query statements in a script, you can separate them with a semicolon.

- You can use mapping variables or parameters in the script file name.

- The script code page defaults to the locale of the operating system. You can change the locale of the script.

- The script file must be accessible by the Integration Service. The Integration Service must have read permissions on the directory that contains the script.

- The Integration Service ignores the output of any SELECT statement you include in the SQL script. The SQL transformation in script mode does not output more than one row of data for each input row.

- You cannot use scripting languages such as Oracle PL/SQL or Microsoft/Sybase T-SQL in the script.

- You cannot use nested scripts where the SQL script calls another SQL script.

- A script cannot accept run-time arguments.

Query Mode

When an SQL transformation runs in query mode, it executes an SQL query that you define in the transformation. You pass strings or parameters to the query from the transformation input ports to change the query statement or the query data.

When you configure the SQL transformation to run in query mode, you create an active transformation.

You can create the following types of SQL queries:

- Static SQL query. The query statement does not change, but you can use query parameters to change the data. The Integration Service prepares the query once and runs the query for all input rows.

- Dynamic SQL query. You can change the query statements and the data. The Integration Service prepares a query for each input row.
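The difference between the two query types can be sketched in plain Python. This is an analogy only: it uses the built-in `sqlite3` module, and the `scooters` table, the column names, and the `dynamic_query` helper are hypothetical, chosen for the illustration. In the static case the statement text stays fixed and only the bound value changes per input row; in the dynamic case part of the statement itself comes from the input row, so a new statement is built each time.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    "CREATE TABLE bikes (name TEXT, price INTEGER);"
    "CREATE TABLE scooters (name TEXT, price INTEGER);"
    "INSERT INTO bikes VALUES ('roadster', 900);"
    "INSERT INTO scooters VALUES ('zip', 400);"
)

# Static query: one statement text, a different bound parameter per input row.
STATIC_QUERY = "SELECT name FROM bikes WHERE price > ?"
static_results = [
    conn.execute(STATIC_QUERY, (min_price,)).fetchall()
    for min_price in [500, 1000]
]

# Dynamic query: the input row changes the statement itself (here, the table).
ALLOWED_TABLES = {"bikes", "scooters"}


def dynamic_query(table_name):
    """Build a new statement for each input row via string substitution."""
    if table_name not in ALLOWED_TABLES:
        raise ValueError(f"unexpected table name: {table_name}")
    return f"SELECT name FROM {table_name} WHERE price > ?"


dynamic_results = [
    conn.execute(dynamic_query(table), (0,)).fetchall()
    for table in ["bikes", "scooters"]
]
conn.close()

print(static_results)
print(dynamic_results)
```

The allow-list check is a design choice of this sketch: substituting strings into a statement bypasses parameter binding, so the substituted value should be validated first.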

Use the following rules and guidelines when you configure the SQL transformation to run in query mode:

- The number and the order of the output ports must match the number and order of the fields in the query SELECT clause.

- The native data type of an output port in the transformation must match the data type of the corresponding column in the database. The Integration Service generates a row error when the data types do not match.

- When the SQL query contains an INSERT, UPDATE, or DELETE clause, the transformation returns data to the SQL Error port, the pass-through ports, and, when it is enabled, the Num Rows Affected port. If you add output ports, they receive NULL data values.

- When the SQL query contains a SELECT statement and the transformation has a pass-through port, the transformation returns data to the pass-through port whether or not the query returns database data. When the query returns no database data, the SQL transformation returns a row with NULL data in the output ports.

- You cannot add the "_output" suffix to output port names that you create.

- You cannot use the pass-through port to return data from a SELECT query.

- When the number of output ports is more than the number of columns in the SELECT clause, the extra ports receive a NULL value.

- When the number of output ports is less than the number of columns in the SELECT clause, the Integration Service generates a row error.

- You can use string substitution instead of parameter binding in a query. However, the input ports must be string data types.
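The rules about the number of output ports can be mimicked with a small function. This is a sketch under stated assumptions, not Informatica code: `fill_output_ports`, `RowError`, and the port and column names are hypothetical, and `sqlite3` stands in for the database. Extra ports receive NULL (`None` in Python), and too few ports produce a row error.

```python
import sqlite3


class RowError(Exception):
    """Raised when a SELECT returns more columns than there are output ports."""


def fill_output_ports(select_row, port_names):
    """Map SELECT columns to output ports by position, padding extras with None."""
    if len(port_names) < len(select_row):
        raise RowError(
            f"{len(select_row)} columns selected but only "
            f"{len(port_names)} output ports"
        )
    padding = [None] * (len(port_names) - len(select_row))
    return dict(zip(port_names, list(select_row) + padding))


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE bikes (name TEXT, price INTEGER)")
conn.execute("INSERT INTO bikes VALUES ('roadster', 900)")
selected = conn.execute("SELECT name, price FROM bikes").fetchone()
conn.close()

# Ports match the SELECT clause in number and order.
matched = fill_output_ports(selected, ["name", "price"])

# One port more than the SELECT clause: the extra port receives None.
padded = fill_output_ports(selected, ["name", "price", "extra"])

# One port fewer than the SELECT clause: a row error.
try:
    fill_output_ports(selected, ["name"])
    too_few_error = None
except RowError as exc:
    too_few_error = str(exc)
```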

After you create the SQL transformation, you can define ports and set attributes in the following transformation tabs:

- Ports. Displays the transformation ports and attributes that you create on the SQL Ports tab.

- Properties. SQL transformation general properties.

- SQL Settings. Attributes unique to the SQL transformation.

- SQL Ports. SQL transformation ports and attributes.

Properties Tab

Configure the SQL transformation general properties on the Properties tab. Some transformation properties do not apply to the SQL transformation or are not configurable.

The following table describes the SQL transformation properties:

| Property | Description |

| Run Time Location | Enter a path relative to the Integration Service node that runs the SQL transformation session. If this property is blank, the Integration Service uses the environment variable defined on the Integration Service node to locate the DLL or shared library. You must copy all DLLs or shared libraries to the run-time location or to the environment variable defined on the Integration Service node. The Integration Service fails to load the procedure when it cannot locate the DLL, shared library, or a referenced file. |

| Tracing Level | Sets the amount of detail included in the session log when you run a session containing this transformation. When you configure the SQL transformation tracing level to Verbose Data, the Integration Service writes each SQL query it prepares to the session log. |

| Is Partition able | Multiple partitions in a pipeline can use this transformation. Use the following options: - No. The transformation cannot be partitioned. The transformation and other transformations in the same pipeline are limited to one partition. You might choose No if the transformation processes all the input data together, such as data cleansing. - Locally. The transformation can be partitioned, but the Integration Service must run all partitions in the pipeline on the same node. Choose Locally when different partitions of the transformation must share objects in memory. - Across Grid. The transformation can be partitioned, and the Integration Service can distribute each partition to different nodes. Default is No. |

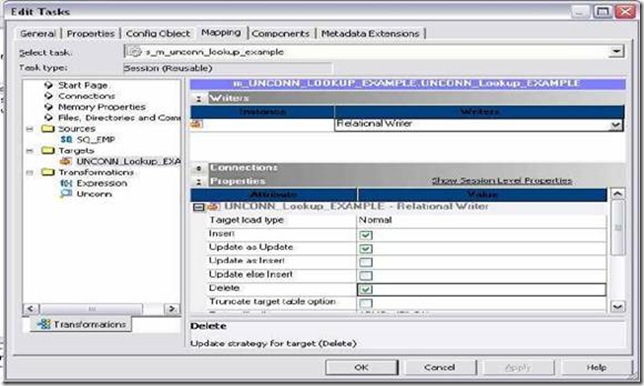

| Update Strategy | The transformation defines the update strategy for output rows. You can enable this property for query mode SQL transformations. Default is disabled. |

| Transformation Scope | The method in which the Integration Service applies the transformation logic to incoming data. Use the following options: - Row - Transaction - All Input Set transaction scope to transaction when you use transaction control in static query mode. Default is Row for script mode transformations.Default is All Input for query mode transformations. |

| Output is Repeatable | Indicates if the order of the output data is consistent between session runs. - Never. The order of the output data is inconsistent between session runs. - Based On Input Order. The output order is consistent between session runs when the input data order is consistent between session runs. - Always. The order of the output data is consistent between session runs even if the order of the input data is inconsistent between session runs. Default is Never. |

| Generate Transaction | The

transformation generates transaction rows. Enable this property for

query mode SQL transformations that commit data in an SQL query. Default is disabled. |

| Requires Single Thread Per Partition | Indicates if the Integration Service processes each partition of a procedure with one thread. |

| Output is Deterministic | The

transformation generate consistent output data between session runs.

Enable this property to perform recovery on sessions that use this

transformation. Default is enabled. |

Create Mapping:

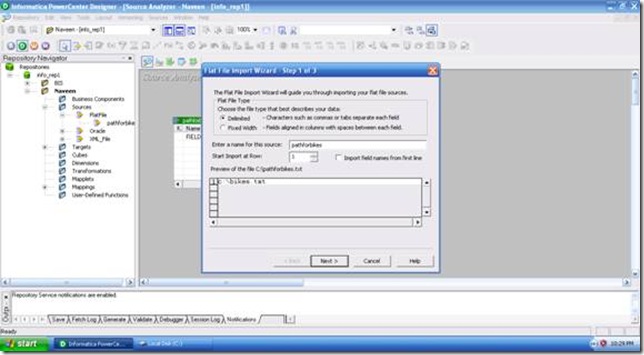

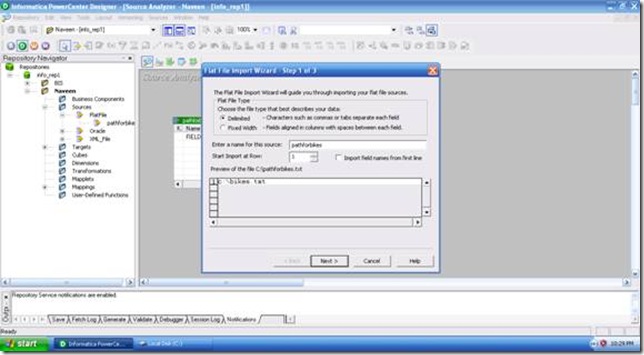

Step 1: Creating a flat file and importing the source from the flat file.

- Create a Notepad file (saved as C:\bikes.txt, the path used in the next step) and in it create a table named bikes with three columns and three records.

- Create a second Notepad file and name it "path for the bikes". Inside it, type the path (C:\bikes.txt) and save the file.

- Import the source (the second Notepad file) using Sources -> Import from File. A wizard with three successive windows opens; follow the on-screen instructions to complete the import.
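For readers who prefer to script this step, the two files can be produced as follows. This is a hypothetical sketch: the post does not show the contents of bikes.txt, so the column names and the three records here are invented for illustration, and the files are written to a temporary directory rather than to C:\. The final lines run the script against an in-memory SQLite database purely as a sanity check that it defines three records.

```python
import sqlite3
import tempfile
from pathlib import Path

# Assumed script contents: only the table name "bikes" comes from the post.
BIKES_SCRIPT = """\
CREATE TABLE bikes (name VARCHAR(20), model VARCHAR(20), price INTEGER);
INSERT INTO bikes VALUES ('alpha', 'A1', 500);
INSERT INTO bikes VALUES ('bravo', 'B2', 700);
INSERT INTO bikes VALUES ('charlie', 'C3', 900);
"""

work_dir = Path(tempfile.mkdtemp())

# First file: the SQL script itself.
script_file = work_dir / "bikes.txt"
script_file.write_text(BIKES_SCRIPT)

# Second file: a single line holding the complete path to the script.
path_file = work_dir / "path_for_the_bikes.txt"
path_file.write_text(str(script_file) + "\n")

# Sanity check: the script creates the table and loads three records.
conn = sqlite3.connect(":memory:")
conn.executescript(script_file.read_text())
record_count = conn.execute("SELECT COUNT(*) FROM bikes").fetchone()[0]
conn.close()

print(path_file.read_text().strip(), record_count)
```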

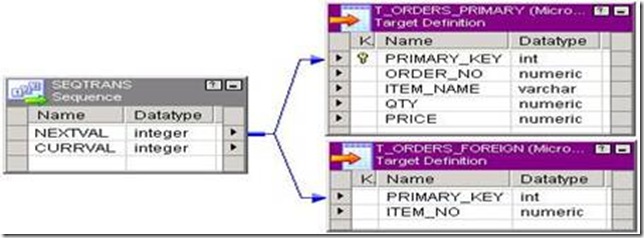

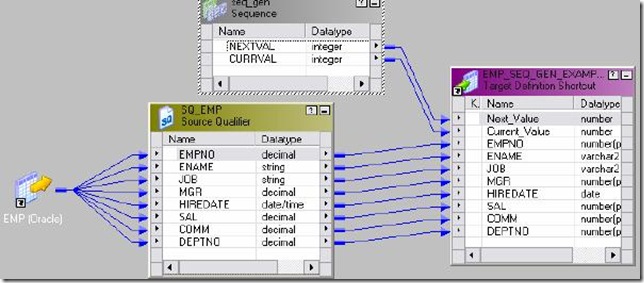

Step 2: Importing the target and applying the transformation.

In the same way, go to Targets -> Import from File and select an empty Notepad file named targetforbikes (another blank file that you create and save under that name in C:\).

- Create two columns in the target table named report and error.

- Everything is now in place. Apply the SQL transformation.

- In the first window that appears when you add the SQL transformation, select script mode.

- Connect the Source Qualifier (SQ) to the ScriptName port under inputs, and connect the other two fields to the corresponding target columns.

Step 3: Design the workflow and run it.

- Create the task and the workflow using the naming conventions.

- Go to the Mapping tab and click the source in the left-hand pane to specify the path for the output file.

Step 4: Preview the output data on the target table.

![clip_image002[5] clip_image002[5]](http://lh3.ggpht.com/_MbhSjEtmzI8/Ta-ZuyaEoGI/AAAAAAAAAXc/KGywh3UwMhU/clip_image002%5B5%5D_thumb%5B1%5D.jpg?imgmax=800)

![clip_image002[6] clip_image002[6]](http://lh4.ggpht.com/_MbhSjEtmzI8/Ta8dMUGfUXI/AAAAAAAAAUU/XFLZbF843-Y/clip_image002%5B6%5D_thumb%5B2%5D.jpg?imgmax=800)
